Research and development in robotics continues to gain momentum, with 20-year projects ahead of many engineers and managers in the field. But can robots get faster? Work alongside humans? Be jet-washed and sanitized? Be simpler to operate? Will they ever be able to think like a human?

Furiously fast

“We’re actually pushing boundaries of 20G speeds with new research; up from regular speeds of 12G…We are working on the stability of production at speed,” said Roy Fraser, global product manager for Robotics at Bosch.

US robotics specialist FANUC Robotics is also working on speed through a new technology called Gakushu, or ‘learning robot technology’.

“We generally aim to make the robot operate 8-15% faster than what a human expert programmer can do,” said Dick Motley, senior account manager for North American Packaging Distribution at FANUC Robotics.

Motley said the technology, launched around nine months ago, tracks data such as robot behavior, movement and vibrations and then uses a computer algorithm to assess and optimize these.

But Fraser said the future of robotics is not just about speed. “We’re looking right across our core competencies; so how to reduce cost per pick, working to be more hygienic, increase output and make robots easier to use.”

He said Bosch is also focused on software advances. “People don’t care about the technology; they just want it to be easy to use…A robot should be as simple to use as a smartphone.”

Eye robot

Motley said FANUC is focused on developing vision capabilities in its robots.

“We are constantly working on vision – to chase that elusive goal of being as good as the human eye…The human eyes and brain are phenomenal. When you try to emulate these, you never come close.”

Vision tracking, in which camera images define the targets a robot picks up, is a powerful enabler in confectionery packaging, he said.

“There are two facets to this; one is quality, the other is that it’s an up-time enhancer.”

Vision enables robots to identify ‘bad’ products and avoid packing them, he said, although attempting to emulate human vision in robots is not easy.

“What is pretty vital to vision applications is getting the lighting, lenses and optics correct. The right conveyor belt for a good color contrast is also essential. It can be a big challenge.”

Getting to grips

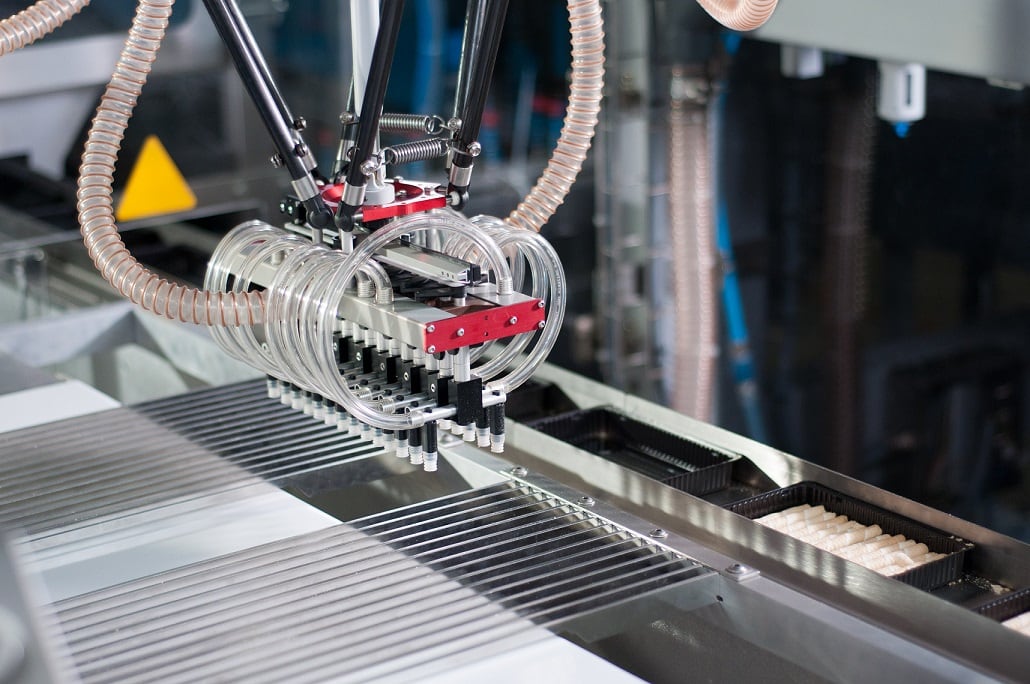

Coming into play alongside vision is the challenge of ‘gripping’ confectionery items, said Andrew Howden, project manager at UK consultancy the Centre for Food Robotics and Automation (Cenfra).

“The gripper is critical for a robot to succeed. The better the grip, the faster and more flexible the robot is.”

Fraser said the gripper design must suit the product. “The challenges are about the product itself and understanding the handling of it.”

Shape, weight and fat content are all factors that determine which gripper should be used when directly handling a confection at the primary packaging stage. For secondary packaging, he said, unusual physical effects such as the Coriolis effect [turning force] can occur and must be managed.

Howden said the newest research is into non-contact grippers, where the confection jumps off the conveyor onto the end effector (gripper).

Squeaky clean

Howden added that the ability to wash robots is another R&D trend. “Robots are not all fully waterproof and are often encased in screens, particularly at a primary packaging stage…There is a shift towards wider use of stainless steel in robots, using different types of alloys and making them smaller for a full wash down.”

Motley said FANUC is “hardening robots for sanitation”. The company has developed two robots with an IP69K protection rating, which certifies that they can withstand cleaning with high-pressure water hoses.

“We’re elevating our game a bit and getting our robots to operate in harsh cleaning environments.”

Robots are not only being geared up for harsh cleaning environments; a growing body of R&D work is also aimed at developing robots that can work safely alongside humans.

“In primary packaging we will start to see more robots with humans I think: Andrew Howden, Cenfra”

“Research is looking into new safety systems and making robots smaller to work alongside humans. But at the moment these robots can only run at low speeds. Future research must look into developing speed and movement detection – so the robot can sense what’s going on around it – what the humans alongside it are doing,” Howden said.